What’s Actually New, and What’s Just Branding


“Collaborative AI” is the buzziest term of 2026. Vendors use it. Analysts use it. LinkedIn thought leaders use it. Most of the time it means almost nothing, because the term has been stretched to cover three completely different things at once. A diagram of multiple agents handing tasks to each other gets called collaborative AI. A single user prompting ChatGPT for help gets called collaborative AI. A team using a shared model in a meeting gets called collaborative AI. Three different things, one term. And the thing that actually matters, the thing that is genuinely new about how teams are starting to work with AI, gets buried under the other two.

This piece is a working definition. Not the marketing one. The one that lines up with what we actually see when we walk into rooms where teams are doing this well, and what is missing from the rooms where they are not.

The shallow definition (and why it does not help you)

The most common use of “collaborative AI” right now describes a multi-agent architecture. One AI agent generates a draft and hands it to a second agent for review, which hands the result to a third for formatting. The agents are collaborating with each other. The diagram is impressive. The phrase has obvious appeal.

This is a useful technical pattern. It is not collaboration in any sense that matters for how people work. There are no humans in the loop. The collaboration is between models. Calling this “collaborative AI” is like calling a pipeline “collaborative software.” The work flows through stages, but no one is collaborating.

The shallow definition gets worse when it is applied to a single person using a chatbot. Someone types a prompt, the model returns text, the person edits it and sends another prompt. This is not collaboration. It is iterative tool use: useful, fast, and individual. The output reflects one person’s thinking improved by a model. No one else’s perspective is in the room.

If you are looking for what actually changes when AI shows up in a team’s workflow, neither of those definitions will help you.

The working definition

Here is the one that holds up in practice. Collaborative AI is the practice of bringing AI into the room with a team, where it influences collective thinking and output in real time, with shared visibility into how the model is contributing. Three pieces matter, and all three have to be present.

In the room with a team. Not one person alone with a chat window. A group, working together, with an AI participating in the work. This could be a workshop, a strategy session, a stand-up, a planning call. The AI is on the screen, not in someone’s pocket.

Shapes the team’s collective output in real time. The model is generating, summarizing, surfacing patterns, drafting alternatives. Whatever the team is producing is being changed by the AI as the team works. Not after the meeting, in someone’s editor. During.

Shared visibility into how the model is contributing. This is the part that gets skipped, and it is the part that determines whether the AI helps the team or quietly hurts them. Everyone in the room knows the model is contributing, knows what it has produced, can see what is AI-generated versus team thinking, and has the chance to push back. The AI is a participant, not a hidden assistant.

When all three are present, you get something that does not happen with individual AI use or multi-agent pipelines. You get a team that can think faster together, with a shared artifact that captures what the model contributed and what the people contributed, and a record of where they pushed back. That is collaborative AI. Everything else is either delegation (one person and a model) or automation (models talking to models).
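The shared artifact described above can be pictured as a simple log in which every entry carries its source and any pushback. What follows is a minimal sketch, not a description of any particular tool: the names `Source`, `Contribution`, and `SharedArtifact` are hypothetical and exist only for illustration.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import List, Optional


class Source(Enum):
    MODEL = "model"
    TEAM = "team"


@dataclass
class Contribution:
    text: str
    source: Source                       # who produced it: the model or the team
    author: str                          # participant name, or a label for the model
    overridden_by: Optional[str] = None  # who pushed back, if anyone
    override_note: str = ""              # why they pushed back


@dataclass
class SharedArtifact:
    """Hypothetical session record: contributions plus pushbacks."""
    contributions: List[Contribution] = field(default_factory=list)

    def add(self, text: str, source: Source, author: str) -> int:
        """Record a contribution and return its index in the log."""
        self.contributions.append(Contribution(text, source, author))
        return len(self.contributions) - 1

    def override(self, index: int, by: str, note: str) -> None:
        """Mark a contribution as pushed back on, keeping the original text."""
        entry = self.contributions[index]
        entry.overridden_by = by
        entry.override_note = note

    def by_source(self, source: Source) -> List[Contribution]:
        """Everything the model (or the team) contributed."""
        return [c for c in self.contributions if c.source is source]

    def pushbacks(self) -> List[Contribution]:
        """Every contribution someone overrode."""
        return [c for c in self.contributions if c.overridden_by is not None]
```

The design choice worth noting is that an override annotates the entry instead of deleting it, so the record of where the team pushed back survives the session.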

What collaborative AI looks like when it works

A leadership team gathers to align on a strategic question. The question is on the screen. So is a model. The facilitator runs the team through a structured divergence: each person types a position privately, the model surfaces themes across the responses, the themes go up on the wall. The team sees the patterns the model found and the dissents the model missed. They argue with the model’s framing. They edit the themes. They re-run the synthesis with their corrections.

Two hours in, the team has alignment on a position they could not have produced in two hours without the AI. They also have a record of what the model contributed and where they overrode it. The output is theirs. The model accelerated the path to it.

Now imagine the same team, same question, without collaborative AI. Three options.

Option A. Each person prepares their position alone, with their own AI assistant. They come to the meeting with polished drafts that look similar because the underlying models were trained on similar content. Discussion devolves into refining the most articulate draft instead of surfacing the real disagreement. The model contributed to each person individually. It did not contribute to the team.

Option B. They run the meeting without AI, fill the wall with sticky notes, take photos for the recap, and the synthesis happens later, in someone’s editor, with a model. The synthesis returns from the model and people argue about whether it captured the room. The model is reading, not collaborating.

Option C. They run a multi-agent system that takes meeting transcripts, summarizes them, and drafts strategic options. The output looks like collaboration. No one is in the room with the model. The team is consuming AI output, not shaping it.

Each option uses AI. None of the three is collaborative AI as the term should be used. Only the facilitated session described first is.
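One way to make the comparison concrete is to check each setup against the three pieces of the working definition. The sketch below is illustrative only: `Setup`, `missing_pieces`, and `is_collaborative` are hypothetical names, and the true/false values given to each option are one reading of the scenarios above, not a measurement.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class Setup:
    name: str
    team_in_room_with_model: bool  # a group works with the AI, not one person alone
    shapes_output_live: bool       # the model changes the work during the session
    contribution_visible: bool     # everyone sees what the model produced and can push back


def missing_pieces(setup: Setup) -> List[str]:
    """Return the parts of the working definition a setup does not meet."""
    checks = {
        "in the room with a team": setup.team_in_room_with_model,
        "shapes output in real time": setup.shapes_output_live,
        "shared visibility": setup.contribution_visible,
    }
    return [label for label, present in checks.items() if not present]


def is_collaborative(setup: Setup) -> bool:
    """All three pieces have to be present."""
    return not missing_pieces(setup)


# Assumed encodings of the scenarios (an interpretation, labeled as such).
setups = [
    Setup("Facilitated session", True, True, True),
    Setup("Option A: private assistants", False, False, False),
    Setup("Option B: synthesis after the meeting", False, False, True),
    Setup("Option C: multi-agent pipeline", False, False, False),
]

for s in setups:
    gaps = missing_pieces(s)
    status = "collaborative" if not gaps else "missing: " + ", ".join(gaps)
    print(f"{s.name} -> {status}")
```

Returning the missing pieces, instead of a bare yes or no, points at what a team would have to change to move a given setup toward the working definition.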

What it requires from teams (and most teams do not have)

The reason collaborative AI works in some rooms and not others has nothing to do with the model. The model is the same. What changes is what the team brings. A facilitator who can hold the room with AI in it. Most facilitation training assumes the facilitator’s job is to manage human dynamics. With AI in the room, the facilitator’s job expands. Who decides when to use the model? When does the model’s output get accepted, and when does it get pushed back on? Who notices when the model is steering the conversation toward a generic framing the team would not have chosen on its own? These are facilitation moves that did not exist three years ago. Teams that have someone who can run them get collaborative AI. Teams that do not, fall back to one of the three options above. Shared norms about transparency.

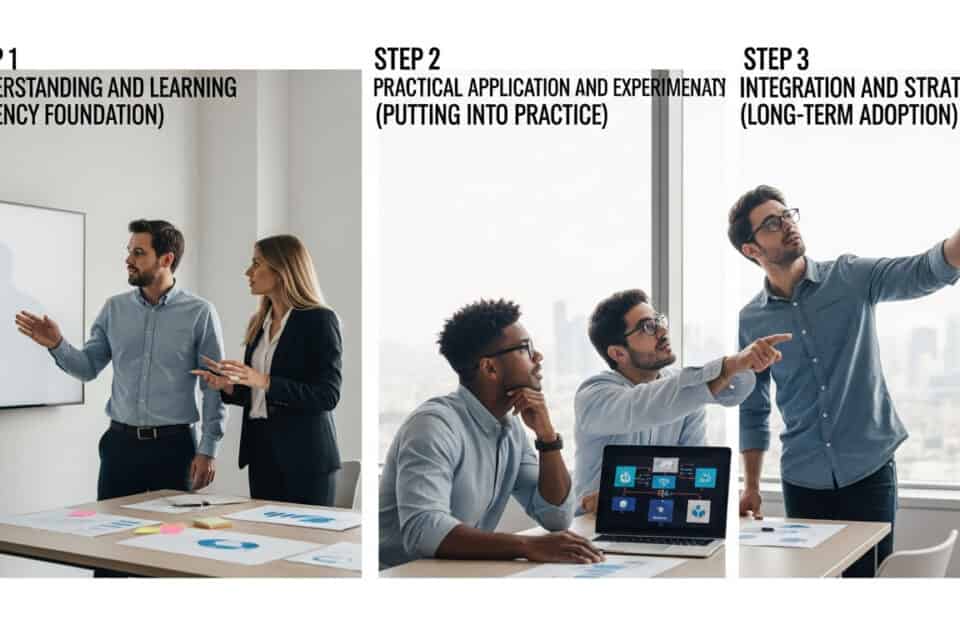

The team has to agree, before the session, on what AI use looks like in the room. Is everyone using it? Are some people privately using it while others are not? Is the model running publicly on the screen, or quietly assisting one person? When AI use is visible, the team can engage with it. When it is hidden, it distorts the room. A working understanding of what the model is good at and what it is not. Models are excellent at synthesis, summarization, divergent generation, and surfacing patterns across text. They are bad at judgment under uncertainty, weighing competing values, and noticing what is missing from a conversation. Teams that know this use the model where it helps and override it where it does not. Teams that do not, drift toward whatever the model recommends. These three capabilities are not technical. They are practices. And practices are slow to build, because they require facilitated repetition.

The branding problem

Most “collaborative AI” content you will read in 2026 will be one of the two shallow definitions, dressed up in language that makes it sound like the working one. Vendors have an incentive to call any AI feature collaborative because the word is selling well. The diagram is collaborative. The chatbot is collaborative. The agent network is collaborative. None of them requires what real collaboration requires, which is more than one person in the same room making decisions together.

The risk for buyers is straightforward: you procure something labeled collaborative AI, deploy it across the organization, and discover that it is a productivity tool for individuals. People use it alone, at their desks, between meetings. The team-level capability you were trying to build never materializes, because the tool was never going to build it. The capability is built by humans, not software.

The good news is that the actual practice of collaborative AI does not require a particular vendor. The model layer is a commodity. What is scarce is the facilitation layer on top, and that is what teams have to build for themselves.

Where this fits in the broader shift

This is one piece of a larger pattern. The friction that matters in 2026 is no longer execution speed. AI has largely removed that friction. The friction that matters is consensus, alignment, and trust at the team and organization level. AI accelerates execution; it does not, on its own, build alignment. In some configurations it makes alignment harder, because individual users move so fast that the team cannot keep up.

Collaborative AI is the response to that. It is what happens when teams refuse to let AI become a private productivity boost and instead bring it into the room as a shared participant. The benefit is real: faster alignment, better synthesis, decisions that more people genuinely own. The cost is that someone has to facilitate it, and most organizations have not built that capability yet.

That is the work in front of leadership teams right now. Not picking the right collaborative AI vendor. Building the team practices that make any AI collaborative.

What to do this quarter

If you are leading a team and want to start moving toward collaborative AI:

Pick one recurring meeting. Not a high-stakes one. A regular planning or review session where the team is already aligned on the format. This is your test environment.

Put a model on the screen. Shared, visible, running. The output of the model goes up where everyone can see it, edit it, push back on it.

Name AI use explicitly. When the model contributes something, say so. When someone overrides it, say so. The transparency is what makes the next session better.

Run it for four weeks. The first session will feel awkward. The second will be better. By the fourth, the team will start to develop instincts about when to invoke the model, when to override it, and how to use it without losing their own judgment. After four weeks, you will know if you have built the practice. You will also know what you need from a facilitator, from governance, and from team training to scale it.

The teams that build this capability now will compound on it for the next decade. The teams that wait for the right vendor or the right tool will still be looking for the right vendor when the friction has moved somewhere else. That is the difference between collaborative AI as branding and collaborative AI as a capability. The branding will keep shifting. The capability is yours once you build it.