I’m still amazed at how many startups don’t have test cases for their code. In fact, many of them have opted out of most devops and developer experience tooling such as continuous integration and automated delivery as well. I do understand that in the early days it is important to quickly deliver value to customers and continuously iterate and test new ideas. However, there must be a balance established between moving fast and moving carefully.

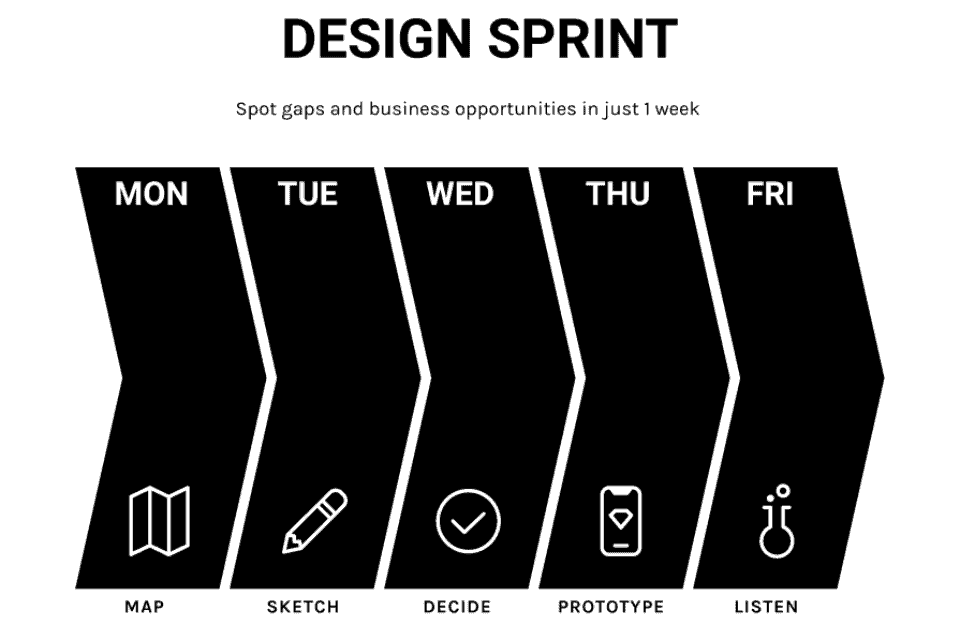

If more companies conducted upfront research, market validation, and rapid prototyping, they might gain enough confidence that their code will last to justify moving a little slower and following best practices. After some initial research, running a Design Sprint is my favorite way to help an organization begin embracing the benefits of rapid prototyping and moderated user interviews.

Below are some benefits and approaches to writing test cases that will hopefully help convince you to start writing tests for your existing code base or to include them as you begin building an MVP.

Modular Architecture

When startups are too eager to get a product to market, don’t understand the value in test cases, or are just too lazy to write test cases, they are setting themselves up for a painful testing retrofit in the future. Once you start writing code, it is important to immediately start writing tests and continuously growing and maintaining the test suites.

When teams wait to write tests, they run the risk of writing code that is difficult to test. Once they begin to write tests, they quickly realize that writing the tests requires refactoring the code. This refactoring can be non-trivial and often includes architectural changes. If the code base has grown considerably without tests, reaching an acceptable level of code coverage can be time-consuming. When adding tests requires considerable effort, convincing leadership to support that effort is a challenge. Teams that build tests in parallel with the product don’t need approval to halt other work in order to write tests.
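To make the refactoring point concrete, here is a minimal sketch in Python. The function name `is_trial_expired` and the 14-day trial rule are hypothetical, invented purely for illustration. A version that reads the system clock directly is awkward to test; accepting the current time as an optional parameter is one common way to decouple the logic so a test can control it.

```python
from datetime import datetime, timedelta

TRIAL_LENGTH = timedelta(days=14)  # hypothetical business rule


def is_trial_expired(signup_date, now=None):
    """Return True once more than TRIAL_LENGTH has passed since signup.

    `now` is injectable so tests do not depend on the real clock.
    """
    if now is None:
        now = datetime.now()
    return now - signup_date > TRIAL_LENGTH


def test_trial_expires_after_fourteen_days():
    signup = datetime(2024, 1, 1)
    # Exactly 14 days later the trial is still valid.
    assert not is_trial_expired(signup, now=datetime(2024, 1, 15))
    # One day after that it has expired.
    assert is_trial_expired(signup, now=datetime(2024, 1, 16))
```

Code written with tests from day one tends to grow seams like the `now` parameter naturally, which is much cheaper than adding them to a large code base later.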

Test-Driven Development

Some engineers write tests before writing code, using a process called Test-Driven Development. However, I find that many developers do not work well under this approach. I recommend adopting a Test-Driven Development mindset regardless of whether the tests are written before or alongside the code. The common mistake is waiting far too long to write the test cases. In addition to encouraging more decoupled and testable code, Test-Driven Development leads to fewer bugs and reduces the time needed to address them. Developers can quickly determine whether their code is working as expected without stepping through a manual process every time they introduce a change, which lets them check progress after even the smallest change.
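As a rough illustration of the test-first rhythm, the sketch below writes the test before the code it exercises. The `apply_discount` function and its rules are hypothetical and exist only for this example.

```python
# Step 1: the test comes first and describes the behavior we want.
def test_apply_discount():
    assert apply_discount(100.0, 20) == 80.0
    assert apply_discount(100.0, 0) == 100.0
    try:
        apply_discount(100.0, 150)
    except ValueError:
        pass  # an out-of-range percentage should be rejected
    else:
        raise AssertionError("expected ValueError for percent > 100")


# Step 2: the simplest implementation that makes the test pass.
def apply_discount(price, percent):
    """Return `price` reduced by `percent` (0 to 100)."""
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return price * (100 - percent) / 100
```

Whether the test is written a few minutes before or a few minutes after the function matters less than having it in place before the next change lands.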

Don’t wait until the car is fully assembled to test drive it at 100 miles an hour.

Regression Suites

A well-maintained regression test suite gives developers instant feedback when they have unexpectedly introduced a defect in a piece of code that they didn’t actually change. Writing an extensive regression suite that covers all possible scenarios can be a time-consuming effort, especially for legacy projects. Instead, write a test case whenever a regression is discovered in production. Use the test case to recreate the production issue, and only consider the issue resolved when the test case passes. In some cases, this approach actually speeds up the process of recreating the issue, and it almost always decreases the time required to fix and validate it. In addition to these productivity gains, you now have a new test in your regression suite and can be confident that this issue won’t slip into production again unnoticed.
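Here is a small sketch of that workflow, assuming a hypothetical production bug in which an `average_order_value` function crashed with a division by zero for customers who had no orders. The function and the bug are invented for illustration. The regression test is written first to reproduce the failure, and the issue is closed only once it passes.

```python
def average_order_value(order_totals):
    """Return the mean order total, or 0.0 when there are no orders."""
    # Fix: the (hypothetical) original divided unconditionally and
    # raised ZeroDivisionError for an empty list.
    if not order_totals:
        return 0.0
    return sum(order_totals) / len(order_totals)


def test_regression_customer_with_no_orders():
    # Reproduces the production issue; fails until the fix is in place.
    assert average_order_value([]) == 0.0


def test_average_is_unchanged_for_normal_input():
    # Guards against the fix altering existing behavior.
    assert average_order_value([10.0, 20.0]) == 15.0
```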

Hot Spots

Most products or systems have hot spots that are brittle and difficult to maintain. Defects are common whenever you add to these hot spots, modify them, or use them in some new way. With proper annotation in your bug tracking system, it is easy to identify and track these hot spots. When a large percentage of bugs are isolated to specific functionality or libraries, give extra priority to writing tests that address those hot spots. Investing time in this kind of visibility lets you target the tests you write so that you can avoid expensive defects in the future.
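If your bug tracker can export bugs with a component label, a few lines of code are enough to surface hot spots. The sketch below assumes a hypothetical export format (a list of dictionaries with a `component` key) and an arbitrary 25% threshold; neither comes from any particular tracker.

```python
from collections import Counter


def find_hot_spots(bugs, min_share=0.25):
    """Return (component, bug_count) pairs that account for at least
    `min_share` of all reported bugs, most affected first."""
    counts = Counter(bug["component"] for bug in bugs)
    total = sum(counts.values())
    return [
        (component, count)
        for component, count in counts.most_common()
        if count / total >= min_share
    ]


# Hypothetical export from a bug tracker.
bugs = [
    {"id": 1, "component": "billing"},
    {"id": 2, "component": "billing"},
    {"id": 3, "component": "auth"},
    {"id": 4, "component": "billing"},
    {"id": 5, "component": "search"},
]

hot_spots = find_hot_spots(bugs)
```

With this sample data, `billing` accounts for three of the five bugs and is the only component over the threshold, so it is the first place to invest in tests.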

Code Coverage

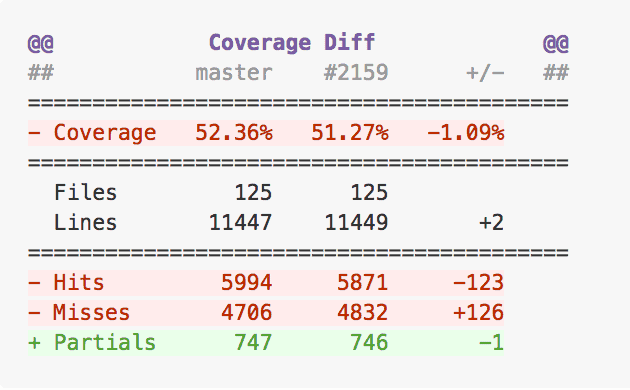

Code coverage is a measure of how much code is exercised when you execute your tests. Most tools display a list of folders with the percent covered and allow you to expand the folders to see coverage for their children. These reports will help you decide where your tests are lacking and point you towards new tests to write. Start by adding test cases for files and functions that are part of your most business-critical functionality. When adding tests to an existing code base, start with modules that are easy to test or have little control flow, so you can build out your initial code coverage and expand your test development capabilities. Next, focus on code that has complex control flow logic or is difficult to read, as this code is likely to be buggy or hard to maintain confidently without tests.

Set a goal of 80% line and 80% file/line coverage. Use a code coverage tool and run it regularly. Most teams tie this to their build and get reports on every build, or once a day, delivered to them via email or Slack. Set up alerts and stay diligent about maintaining high code coverage numbers.
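Coverage tools produce these numbers for you, but the idea behind an alert is simple enough to sketch. The example below assumes a hypothetical report format, a dictionary mapping each file path to its covered and total line counts, and lists the files that fall short of the 80% goal, worst first. The file names are made up.

```python
COVERAGE_GOAL = 80.0  # percent, matching the goal suggested above


def percent_covered(covered_lines, total_lines):
    """Line coverage as a percentage; an empty file counts as covered."""
    if total_lines == 0:
        return 100.0
    return 100.0 * covered_lines / total_lines


def files_below_goal(report, goal=COVERAGE_GOAL):
    """Return (path, percent) pairs under `goal`, lowest coverage first."""
    below = []
    for path, (covered, total) in report.items():
        pct = percent_covered(covered, total)
        if pct < goal:
            below.append((path, pct))
    return sorted(below, key=lambda item: item[1])


# Hypothetical per-file data: path -> (covered lines, total lines).
report = {
    "billing/invoice.py": (45, 100),
    "auth/login.py": (90, 100),
    "utils/dates.py": (30, 40),
}

needs_tests = files_below_goal(report)
```

Here `needs_tests` contains `billing/invoice.py` at 45% followed by `utils/dates.py` at 75%, which is the kind of short list worth sending to the team after each build.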

Continuous Integration

Continuous integration (CI) is a development practice that requires teams to integrate code into a shared repository regularly. When a developer integrates a new piece of code, it is immediately verified by running a test suite as part of an automated build, which quickly detects any problems. Use a CI tool such as CircleCI, Jenkins, or Travis CI to automate the execution of your tests and code coverage and get more leverage from your tests.

Code Reads

It is a common practice to have code reviewed by a peer prior to integrating it through the continuous integration tool. This practice ensures that readability problems, formatting issues, and even defects are identified early, before additional dependent code is built on top and they become expensive to correct. I’m a proponent of auditing the test cases when reviewing code. This is a great time to identify whether the author of the changes left out test cases for new code or whether the old test cases are no longer adequate. Track the issues found during code reads as well as the issues that escaped code reads and should have been found. Encourage the team to be critical, incentivize them to actually find things, and hold them accountable.
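Part of that test audit can be nudged along with a small script. The sketch below assumes a hypothetical convention in which every source file `name.py` has a matching `tests/test_name.py`; given the list of paths changed in a review, it flags source files whose tests were not touched. It is a prompt for the reviewer, not a substitute for reading the tests.

```python
from pathlib import PurePosixPath


def missing_test_changes(changed_paths):
    """Return changed Python source files whose matching test file
    (tests/test_<name>.py, an assumed convention) was not also changed."""
    changed = set(changed_paths)
    missing = []
    for path in sorted(changed):
        p = PurePosixPath(path)
        # Skip non-Python files and the test files themselves.
        if p.suffix != ".py" or p.name.startswith("test_"):
            continue
        expected_test = f"tests/test_{p.name}"
        if expected_test not in changed:
            missing.append(path)
    return missing


# Hypothetical list of files changed in one review.
flagged = missing_test_changes([
    "app/billing.py",
    "tests/test_billing.py",
    "app/auth.py",
    "README.md",
])
```

In this example only `app/auth.py` is flagged, because `app/billing.py` arrived with an updated test file and `README.md` is not code.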

Social Testing

One of my favorite quality tools is what I call the “Test Fest”. Once a week or so, I gather as much of the company as possible for a social testing session. This practice is great at surfacing usability and visual defects, and it helps the team learn about new capabilities in the product that they haven’t seen yet. Test Fest is also an opportunity for the team to share ideas and perspectives, which increases empathy and builds shared understanding.

Rainforest, an automated test platform, did a case study on Twyla’s test fest practice. You can read the case study here.

Conclusion

It’s easy to skip tests early on in the excitement to launch code and gather user feedback. If you are starting a new project, or if you are a bit further along and don’t have adequate tests, take the time to invest in the quality of your code base. You’ll find that you are more efficient and predictable overall. If you are uncertain whether you are building the correct thing and are apprehensive about spending time on tests, consider a Design Sprint. You can get more insightful results in less time, and you avoid technical debt.