50% of the skill is gone in 24 hours. Here’s what actually works.


The data is in, and it confirms what many of us suspected: the way most organizations are approaching AI adoption is fundamentally broken. What looks like an AI upskilling problem is actually a design problem, and no amount of additional training will solve it.

In the same week, two independent reports landed on the same conclusion from completely different angles. Gartner’s Digital Workplace Summit presented research showing that generic AI training produces generic results, that 72% of IT leaders say Copilot users struggle to integrate it into their daily routine, and that collaboration, not individual tool proficiency, is the #2 skill IT workers need right now. Meanwhile, Anthropic released its Economic Index showing that experienced AI users get measurably better results than newcomers, and the gap compounds over time. People who have used AI for six months or more have a 10% higher success rate in their conversations. The longer you use it, the wider the gap gets.

This is not a training problem. This is a design problem.

Why AI Upskilling Fails: The Training Trap

Here is what most organizations are doing: they buy licenses, schedule a training session, maybe run a webinar series, and call it done. Gartner’s data shows exactly what happens next. License counts rise. Active daily usage stays flat. Within a day, employees have lost 50% of what they learned. After six days, 90% is gone.

That is not a failure of the training content. It is a failure of the approach. You cannot teach AI fluency in a classroom any more than you can teach someone to swim by showing them a PowerPoint about water.

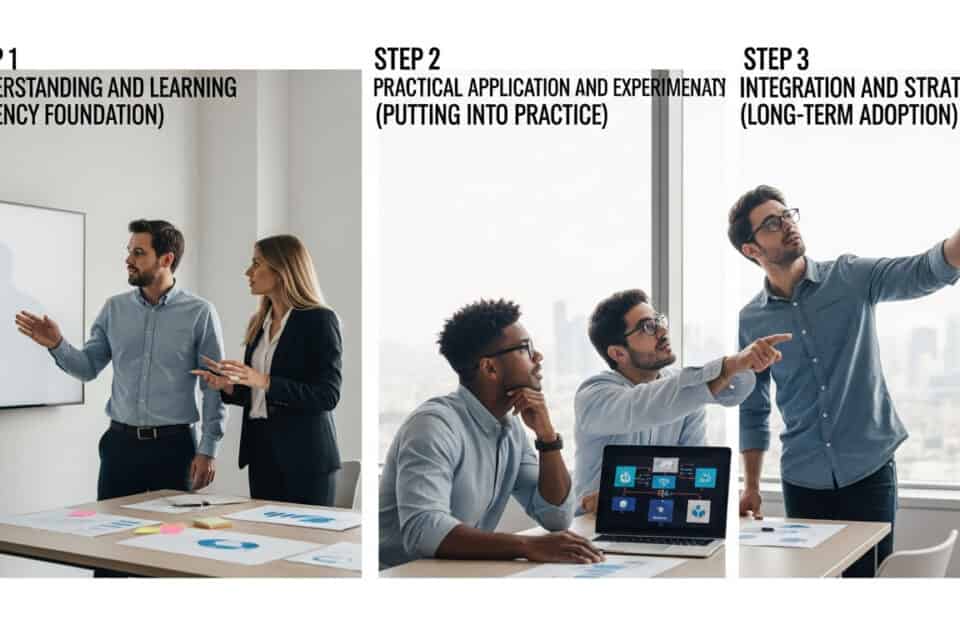

AI fluency is not taught. It is sparked. Nobody learns something they do not want to learn, and nobody retains a skill they do not practice immediately in the context of their actual work. The most common misconception we encounter when a client first engages us is that training is a one-and-done experience, and that a single small training event is all it takes to drive change. The reality is that AI upskilling that sticks comes from stacking small, deliberate practice over time, not from a single workshop.

The Anthropic data makes this even sharper. Their report studied over a million conversations and found that the gap between experienced and new users is not explained by what tasks they are doing, what country they are in, or what model they are using. It is explained by how they interact. Experienced users do not just delegate tasks. They iterate, push back, validate, and learn. They treat AI as a collaborator, not a vending machine.

That is a skill that gets built through practice, not instruction.

The Perception Gap Nobody Is Talking About

Gartner surfaced a stat at the Digital Workplace Summit that should alarm every executive reading this: executives are four times more likely to report high AI productivity gains. Individual contributors are five times more likely to say AI made no difference.

Read that again. The people making the adoption decisions and the people doing the adoption are living in different realities.

This is not a technology gap. It is a perception gap, and it is driven by something deeper than skill level. When four out of five employees believe their organization is trying to replace them with AI, and only 12% feel involved in the decisions about how AI gets used, you do not have a training problem. You have a trust problem. And no amount of lunch-and-learn sessions will fix it.

Consider what this looks like on the ground. A VP of digital transformation rolls out an AI copilot and sees her own productivity jump. She assumes everyone else is having the same experience. Meanwhile, 78% of employees do not even know whether they will lose their job to AI. They are not experimenting with the tool. They are watching it with suspicion, trying to figure out what it means for them. The same technology that feels like a superpower to the executive feels like a threat to the person three levels down.

We see this pattern constantly. Teams do not resist AI because they lack skills. They resist because they have no vision for what purposeful adoption looks like, and because they were not included, they do not feel they have any agency in it. It is a mix of a capability gap and a design gap, and the design gap is the one nobody is addressing.

The organizations seeing real value from AI share one characteristic that the others do not: alignment. Gartner found that organizations with business-IT-executive alignment on what problems AI should solve are three times more likely to report significant value. Only 14% of organizations have that alignment today. That is not a technology gap. That is a conversation that has not happened yet.

The Experience Starvation Problem

There is a more insidious consequence of getting AI adoption wrong, and most leaders are not seeing it yet.

When senior people use AI to do junior work faster, they are not just being more productive. They are removing the on-ramps that junior employees need to develop expertise. Gartner calls this “experience starvation.” The expert uses AI to absorb tasks that used to be the proving ground for new hires. The new hire never gets the reps. The pipeline for developing the next generation of talent quietly breaks.

Think about what this means in practice. A senior analyst who once delegated data cleaning to a junior team member now does it herself in minutes with AI. The junior analyst never learns the structure of the data, never develops the intuition that comes from wrestling with messy inputs. The senior person is more productive. The junior person is more expendable. And the organization has quietly eliminated the apprenticeship model that built its bench strength.

This is already showing up in the data. Anthropic’s report found that job-finding rates for 22-to-25-year-olds in AI-exposed occupations have dropped 14% compared to 2022. Software developer employment in that age cohort has declined roughly 20% from its late-2022 peak. The junior roles are not being automated away by AI. They are being absorbed by seniors who now have AI doing the work that used to be someone else’s learning curve.

There is a troubling feedback loop here as well. Anthropic’s researchers found that developers who used AI assistance scored 50% on follow-up knowledge assessments, compared to 67% for those who coded by hand. The tool makes you faster today while potentially making you less capable tomorrow, unless the learning environment is designed to counteract that effect.

Gartner projects that 56% of CEOs will use AI to de-layer middle management within five years. The question is not whether the org chart is going to flatten. It is whether anyone is designing what replaces the development pathways that disappear when it does.

The AI-Fluent Island Problem

Here is something we did not expect to find, but now see repeatedly: the teams with the most AI-fluent individuals are not always the teams getting the most value.

When a few people on a team develop real AI proficiency while everyone else stays at the basics, something counterintuitive happens. The fluent members pull ahead in their individual work, but they cannot embed what they are learning back into the team. They are producing faster, thinking differently, using AI as a genuine thought partner, but the team’s processes, meetings, and decision-making structures have not changed. The fluent members end up on an island.

In some ways, this is worse than universal low adoption. At least when nobody is using AI, the team is aligned in their way of working. When a few members leap ahead without the collaborative infrastructure to support it, you get fragmentation. The AI-fluent people get frustrated because they can see what is possible but cannot bring the team along. The rest of the team feels left behind or skeptical. The organization gets pockets of individual productivity gains that never compound into team-level or org-level value.

This is the single biggest blind spot in the “train the champions” approach that many organizations default to. Champions without a collaborative model just become isolated experts.

Why Collaboration Is the Missing Layer

Here is the part that most AI adoption strategies completely miss: the highest-value applications of AI are not individual. They are collaborative.

Gartner’s research ranks collaboration as the #2 skill IT workers need, at 47%, right behind AI/GenAI itself at 53%. That is not a coincidence. As AI handles more of the execution work, the human work that remains is increasingly about alignment, decision-making, and working across functions. The ability to think together becomes more important precisely because the machines handle more of the thinking alone.

The Anthropic data reinforces this from a different angle. Their report distinguishes between “automation” (delegating a task to AI) and “augmentation” (using AI as a thought partner for more complex, creative, or strategic work). On the consumer platform, augmentation already accounts for 53% of usage. Experienced users disproportionately favor augmentation over pure automation. They have learned that the real value is not in having AI do something for you. It is in having AI think with you.

But thinking with AI is a multiplayer activity. When a team uses AI to generate options, stress-test a strategy, or prototype a solution, the output is only as good as the process that surrounds it. If that process is broken, more inputs arriving faster can actually slow alignment down. A team that cannot align on a decision without AI is not going to align any faster with it. They are just going to generate more options to disagree about.

This is where most organizations have a gap they cannot see. They are investing in individual AI skills while ignoring the collaborative infrastructure that makes those skills productive at scale. They are optimizing the nodes while neglecting the network.

What Real AI Upskilling Looks Like

The organizations that are getting real value from AI are not running better training programs. They are redesigning how teams work together. That is what AI upskilling actually looks like in practice.

The shift that matters is moving from AI as a tool to AI as a toolmate, a participant in the collaborative process rather than something individuals use in isolation. This shift is still so new that most teams do not have models for it yet. “Where do we start beyond the single-player approach?” is the question we hear most often. But when you provide those models, when you show teams what collaborative AI actually looks like in practice, excitement builds fast. People can suddenly see what is possible.

We saw this recently with a client whose previous AI training had focused entirely on individual use cases. Adoption was uneven, value was scattered, and the team could not connect their individual AI experiments to meaningful outcomes. When we introduced collaborative AI and AI toolmates, working with AI as a team rather than as individuals, it was a major unlock. Both the teams and executives saw the shift in real time. The difference was not better training. It was a fundamentally different model for how AI gets used.

Different roles also need fundamentally different AI strategies. Experts need AI that extends their capacity. People still building expertise need AI that accelerates their learning without starving them of foundational experience. A one-size-fits-all training program is the opposite of what any of them need.

The Anthropic data points to the same conclusion from the user behavior side. Their researchers found that high-tenure users actually grant AI lower autonomy, not higher. They stay more involved, iterate more, and get better results because of it. The best AI users are not the ones who have learned to delegate everything. They are the ones who have learned when to push back, when to redirect, and when to go deeper.

That kind of fluency does not come from a training module. It comes from practice in a structured environment, with feedback, with real stakes, and ideally with other people learning alongside you. Think of it as AI fitness, not AI training. A gym metaphor rather than a classroom metaphor. You do not get fit by attending a lecture about exercise. You get fit by showing up consistently and doing the work.

The Widening Gap

The urgency here is not abstract. It is compounding.

Anthropic’s data shows that the skills gap between experienced and new AI users is hardening into something more structural. Washington, D.C., where the population skews highly educated, has AI adoption rates four times what you would expect for a city of its size. Globally, the top 20 countries account for 48% of per-capita AI usage, and that concentration is increasing. The people who started early are pulling further ahead. The organizations that figured this out first are building advantages that will be very difficult to close.

Gartner predicts that by 2027, 75% of hiring processes will include AI proficiency testing. At the same time, the atrophy of critical thinking skills due to GenAI use is already pushing organizations toward “AI-free” skills assessments. The workforce is bifurcating: people who can work with AI as a genuine collaborator, and people who either cannot use it effectively or have let it do their thinking for them.

59% of the workforce needs brand new skills in the next two to three years. That is not a number that gets solved by scaling up existing training approaches. It requires a fundamentally different design.

The Design Problem

The question is not “how do we train people on AI.” The question is “how do we redesign how teams work together when half the team is agents.” That reframing is the gap between AI training and real AI upskilling.

That is a facilitation challenge, not a technology challenge. It requires someone who understands how groups make decisions, how trust gets built (and broken), and how to create the conditions for people to develop new capabilities through practice rather than instruction.

32 million jobs will be transformed per year due to AI. Gartner estimates that managing this transformation requires 20 times more organizational effort than managing job losses. That is the single most important stat in all of this research. The hard part is not the technology. It is the organizational design work that makes the technology productive.

Today, 80% of IT work is done by humans without AI. By 2030, Gartner projects that 75% will be done by humans with AI, and 25% by AI alone. That transition does not happen through training programs. It happens through deliberate redesign of how people and AI work together, role by role, team by team, process by process.

The organizations that treat AI adoption as a training problem will keep buying licenses that do not get used, running workshops that do not stick, and watching the gap between their AI-fluent employees and everyone else widen. The organizations that treat it as a design problem, one that requires rethinking collaboration, decision-making, and how people learn together, will be the ones that capture the real value.

The tools are ready. The question is whether your organization is designed to use them.

If you are rethinking how your teams work with AI and want to explore what a design-first approach to AI upskilling looks like, let’s talk.