Turning AI uncertainty into practice with the Edge Maps

Table of contents

- Predictable Change, Unpredictable Edges

- Why Enterprise AI Adoption Stalls

- Reframing AI as a Shoreline, Not a Cliff

- The Missing Layer: Navigating AI Edges

- Introducing the Edge Maps: An 8-Minute Adoption Accelerator

- Applying Edges Thinking to Enterprise AI Adoption at Scale

- From Map to Movement: Prototyping the Smallest Real Step

- Crossing the Shoreline

Enterprise AI adoption isn’t a roadmap problem. It’s an edge problem.

Across organizations, AI initiatives are accelerating — pilots are multiplying, tools are proliferating, and policies are emerging in parallel. Executive teams are crafting AI strategies. Boards are asking about posture and readiness. Departments are experimenting with copilots and automation.

Yet many leadership teams feel the same tension: adoption is uneven, alignment is fragile, and anxiety lingers beneath the surface.

What’s often missing isn’t strategy. It’s a way to navigate the edges AI creates.

Edges aren’t problems to solve. They’re thresholds: places where something new is trying to emerge. When AI enters workflows, it doesn’t just add capability; it reshapes roles, decision rights, operating rhythms, and expectations. That reshaping generates friction. And friction, when unnamed, becomes resistance.

When named and structured, it becomes movement.

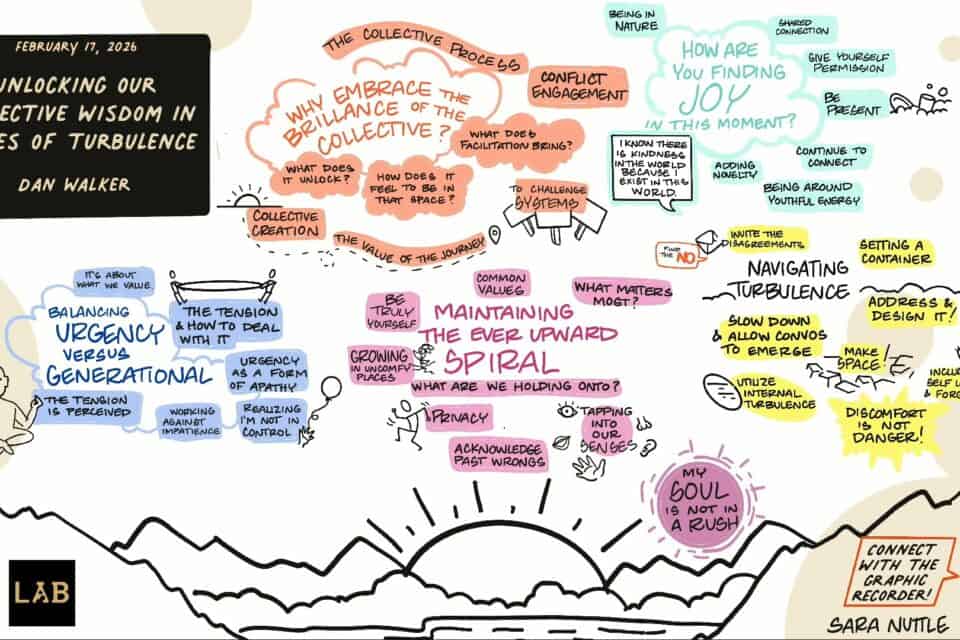

At our February summit, we debuted a simple tool called the Edge Maps and used it live with 150 leaders, many of them navigating AI adoption in their organizations. In eight focused minutes, the room surfaced present realities, named thresholds, and committed to small, reversible experiments. The energy shifted from ambient overwhelm to organized momentum.

This article explores why enterprise AI adoption stalls at the edge and how a lightweight, structured approach can turn tension into forward motion.

Predictable Change, Unpredictable Edges

As February winds down, I’m reminded of a rhythm my wife lives with every year. She runs a garden center, and each spring the staff nearly triples. The ramp-up is expected. It’s seasonal. It’s planned.

And yet, every year feels different.

The mix of people shifts. Regulations change. Customer behavior evolves. Some seasonal employees return; many don’t. Training needs are familiar in shape but new in detail. Even when the pattern is predictable, the edge itself is not identical.

The edge is recurring, but never the same.

Enterprise AI adoption operates in much the same way.

You know AI waves are coming. You anticipate expansion. You build pilots. You set budgets. You hold strategy sessions. And still, each wave arrives with edges you haven’t met before:

- A new team integrating AI into a legacy workflow.

- A new compliance interpretation that shifts what’s permissible.

- A new executive expectation to demonstrate measurable ROI.

- A new tension between efficiency and craftsmanship.

- A new layer of role ambiguity.

The edge isn’t a surprise.

The shape of it is.

And because the shape changes, organizations can’t rely on static plans alone. They need a navigational practice — something that helps teams repeatedly step into uncertainty without freezing or overcorrecting.

Why Enterprise AI Adoption Stalls

Most AI strategies begin with tools, policies, or training plans. Those matter. But they don’t address the underlying edges teams are standing on.

Common enterprise AI edges look like this:

- Human vs. AI decision authority

- Tool sprawl and workflow fragmentation

- Governance bottlenecks

- Ethical ambiguity

- Role rebundling and job anxiety

- Measurement confusion — what does “AI success” even mean?

These aren’t purely technical issues. They’re transitional states.

And transitional states create psychological and operational edges.

At the executive layer, enthusiasm is often high. AI is framed as a competitive necessity or strategic imperative.

At the middle layer, uncertainty surfaces:

- Who approves experiments?

- Which tools are sanctioned?

- How do we manage risk?

- What metrics matter most?

- Who is accountable if something goes wrong?

At the frontline, experimentation frequently happens quietly. Individuals test tools on their own, unsure whether their usage is encouraged or merely tolerated.

Legal and governance teams, tasked with managing exposure, can come to be seen as blockers, not because they oppose innovation, but because there are no structured lanes for safe exploration.

Without a structured way to name and navigate these thresholds, organizations default to one of three patterns:

- Debate hypotheticals endlessly.

- Freeze in policy design.

- Launch large initiatives without safe learning loops.

The result? AI remains either an isolated productivity hack or a top-down mandate — not a coordinated, trust-building transformation.

What’s missing is a navigational layer.

Reframing AI as a Shoreline, Not a Cliff

When we hear “edge,” our bodies brace for a fall. An edge feels like a cliff: irreversible and risky.

But what if enterprise AI is more like a shoreline?

Shorelines are dynamic. They shift daily. They invite navigation. They require rhythm, awareness, and adjustment — not panic.

This metaphor matters because it shifts energy from fear to curiosity. From avoidance to orientation.

Leaders can accelerate this shift by explicitly naming AI-related edges at the start of a meeting:

“We’re at the edge of redefining review workflows with AI.”

“We’re at the threshold of clarifying human vs. AI drafting roles.”

“We’re navigating the edge of safe AI-in-use.”

Naming the edge normalizes uncertainty without amplifying fear.

From there, you invite a consent-based experiment: time-boxed, safe-to-try, and small-but-real.

That move alone often transforms a session from:

“We might break something.”

to:

“We’re here to learn together.”

Closers matter just as much as openers. If you name an edge and run an experiment, close by harvesting learning, confirming ownership, and setting the next check-in. In this way, AI adoption becomes rhythmic rather than episodic.

Decision rules and working agreements become critical here. Edges produce ambiguity; decision rules clarify how you move within it. Working agreements make safety visible: how we’ll speak, pause, decide, and adjust.

Together, they form the container that makes AI transformation navigable.

The Missing Layer: Navigating AI Edges

AI is reshaping work in real time, and many organizations are experiencing multiple edges simultaneously:

- Tool selection and integration

- Policy and ethics

- Training and enablement

- Role evolution

- Operating rhythms

- Measures of success

For many teams, AI has become background anxiety, visible but hard to grasp.

The solution isn’t more slides.

It’s structured, small-scale experimentation.

It’s useful to treat AI like the weather. You forecast, prepare, and choose your route accordingly. Some days you sprint. On others you seek cover and regroup.

Practically, that means:

- Define the edge AI is presenting.

- Name it clearly.

- Identify the minimum viable experiment you can run to safely learn.

Minimum viable experiments create maximum alignment because they replace speculation with shared evidence.

Language is a lever here. Instead of “AI risk policy,” try “Safer AI-in-use.” Instead of “AI productivity targets,” try “Co-shaping AI-accelerated workflows.” Verbs like “co-shape,” “test,” “pilot,” and “harvest” nudge teams toward progression rather than perfection.

And while naming matters, don’t let it delay action. Begin exploration and refine language as you go. A named threshold becomes a door people can walk through together.

Introducing the Edge Maps: An 8-Minute Adoption Accelerator

This is where the Edge Maps comes in.

At the summit, we used it to help participants surface AI-related edges and convert them into tangible next steps. In eight minutes, participants lined up present realities, named a threshold, envisioned the near future, and identified the smallest real actions to cross it.

The room’s energy shifted from overwhelmed to organized.

When edges become visible and legible, they become navigable.

After two days of deep practice and dialogue, participants were already holding powerful insights about facilitation, emergence, and AI-shaped work. The Edge Maps offered something different: a structured moment of reflection. It created space to pause, assess what was emerging, and decide how these ideas would translate into practice.

For some, that meant facilitation experiments. For others, it meant operational shifts. And for many, it meant clarifying how they would bring AI adoption back into their teams with intention rather than urgency.

Within minutes of mapping Present, Threshold, and Future, something tightened and clarified. Edges that felt expansive became specific. Possibilities became prototypes. Energy became ownership.

Participants weren’t solving AI adoption in eight minutes. They were converting insight into commitment. That’s the difference.

Here’s the essence of the tool:

- Use one row per edge, up to four.

- Move left to right through three fields: Present, Threshold, Future.

- Diverge in Present. Converge to name the Threshold. Close with tangible Future actions.

- Keep it fast — eight minutes by design.

In the Present field, begin with strengths, resources, and curiosities. Starting here tends to settle the nervous system, especially when AI carries risk or ambiguity. Then acknowledge tensions and constraints.

That pairing — strength plus reality — creates confident curiosity rather than brittle optimism or fear.

Naming the Threshold is the fulcrum. Give it a discussable name. Then define small but real actions to step into and through it. Keep steps reversible.

In the Future field, articulate how it will feel once crossed, what you’ll be doing differently, and how you’ll know you’re there.

The result is a compact artifact that converts ambient AI worry into a trackable learning plan.
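For teams that want to keep their maps somewhere more durable than a whiteboard, the structure described above can be sketched as a small data model. This is only one possible shape, not the tool itself: the names `EdgeRow`, `EdgeMap`, `MAX_ROWS`, `is_complete`, and `open_rows` are illustrative assumptions, as is the choice to treat a row as complete only when every field has at least one entry.

```python
from dataclasses import dataclass, field

# Assumption: the cap mirrors "one row per edge, up to four".
MAX_ROWS = 4


@dataclass
class EdgeRow:
    """One row of the map: a single edge, read left to right (hypothetical model)."""

    # Present: diverge. Strengths and resources first, then tensions.
    strengths: list[str] = field(default_factory=list)
    tensions: list[str] = field(default_factory=list)
    # Threshold: converge on one discussable name plus small, reversible steps.
    threshold: str = ""
    steps: list[str] = field(default_factory=list)
    # Future: how it will feel, what changes, how you'll know you're there.
    future: list[str] = field(default_factory=list)

    def is_complete(self) -> bool:
        """True once Present, Threshold, and Future all have content."""
        present = bool(self.strengths) and bool(self.tensions)
        threshold = bool(self.threshold.strip()) and bool(self.steps)
        return present and threshold and bool(self.future)


@dataclass
class EdgeMap:
    """A map is a short list of rows, capped at MAX_ROWS."""

    rows: list[EdgeRow] = field(default_factory=list)

    def add_row(self, row: EdgeRow) -> None:
        if len(self.rows) >= MAX_ROWS:
            raise ValueError(f"an Edge Map holds at most {MAX_ROWS} rows")
        self.rows.append(row)

    def open_rows(self) -> list[EdgeRow]:
        """Rows that still need a field filled in before they are actionable."""
        return [row for row in self.rows if not row.is_complete()]
```

Keeping strengths and tensions as separate lists preserves the pairing of strength plus reality, and `open_rows` gives a facilitator a quick view of which edges still lack a named threshold or a Future statement.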

Applying Edges Thinking to Enterprise AI Adoption at Scale

Enterprise AI adoption isn’t a single edge. It’s a system of nested thresholds.

Strategic edges sit at the leadership layer.

Operational edges emerge in divisions.

Workflow edges surface inside teams.

Identity edges show up at the individual level.

The Edge Maps cascades effectively across levels:

- Leadership maps organizational edges across policy, enablement, ethics, and operations.

- Divisions map local thresholds shaped by their workflows.

- Teams identify role-level experiments that are small and reversible.

Balance top-down clarity with bottom-up learning.

Leadership sets guardrails:

- Data privacy boundaries

- Ethical commitments

- Reporting expectations

Teams co-shape experiments within those guardrails.

As local experiments produce wins, codify them into shared rituals, templates, and case studies. Innovation spreads without chaos.

Role clarity becomes a multiplier:

- Who owns AI enablement?

- Who stewards policy evolution?

- Who curates internal case studies?

- Who unlocks scale resources?

Consent-based trials reduce fear and increase participation. When people know experiments are time-bound and reversible, they’re more willing to engage.

Visibility accelerates adoption. Choose harvest formats that travel — brief write-ups, short demos, annotated templates. Make learning public and portable.

We’ve seen enterprise AI efforts transform simply by making experimentation legible.

From Map to Movement: Prototyping the Smallest Real Step

A map only matters if you move.

Convert at least one Future statement into a prototype this week.

Small. Real. Reversible.

“Pilot a daily AI stand-up for two weeks” beats “launch an AI initiative.”

“Draft a one-page AI-in-review guideline” beats “complete enterprise framework.”

Before starting, define:

- Safety signals: What tells us this is safe and responsible?

- Success signals: What tells us this is useful?

- Pivot protocol: How will we decide to continue, change, or stop?

Agreeing on pivot rules in advance reduces emotional friction and strengthens trust.

Book the next check-in before leaving the room. Close each session with owner, due date, and smallest viable action.
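The pre-commitments above (safety signals, success signals, a pivot protocol, plus owner and due date) can be written down as a tiny record so the rule is agreed before anyone has a stake in the outcome. What follows is a minimal sketch under stated assumptions: `Experiment`, `Decision`, and `pivot` are hypothetical names, the example signals and date are placeholders, and the rule itself (stop if any safety signal is missing, continue if every success signal is seen, otherwise change) is just one reasonable protocol a team might choose.

```python
from dataclasses import dataclass
from datetime import date
from enum import Enum


class Decision(Enum):
    CONTINUE = "continue"
    CHANGE = "change"
    STOP = "stop"


@dataclass
class Experiment:
    """Hypothetical record of one small, real, reversible step."""

    action: str                 # smallest viable action
    owner: str
    due: date                   # next check-in, booked before leaving the room
    safety_signals: list[str]   # what tells us this is safe and responsible
    success_signals: list[str]  # what tells us this is useful


def pivot(exp: Experiment, observed: set[str]) -> Decision:
    """Apply an assumed pivot rule agreed on in advance.

    Stop if any safety signal was not observed; continue if every
    success signal was observed; otherwise change the experiment.
    """
    if not all(signal in observed for signal in exp.safety_signals):
        return Decision.STOP
    if all(signal in observed for signal in exp.success_signals):
        return Decision.CONTINUE
    return Decision.CHANGE


# Placeholder values for illustration only.
standup = Experiment(
    action="Pilot a daily AI stand-up for two weeks",
    owner="edge steward",
    due=date(2025, 3, 14),
    safety_signals=["no sensitive data shared"],
    success_signals=["team reports the stand-up is useful"],
)

# Safety held, but the success signal has not shown up yet.
print(pivot(standup, {"no sensitive data shared"}).value)  # prints "change"
```

Checking safety before success is deliberate in this sketch: a useful experiment that is not safe should still stop.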

Rotate an “edge steward” role if helpful — someone who keeps the threshold visible and curates learning. Over time, experimentation becomes habit rather than event.

That’s when AI adoption shifts from initiative to capability.

Crossing the Shoreline

Enterprise AI adoption isn’t about eliminating uncertainty. It’s about building capacity to move within it.

Edges are invitations. They mark the place where capability wants to grow.

The Edge Maps provides a lightweight navigational layer — one that makes tension legible, experiments safe, and learning visible.

Name the threshold. Build a container. Take the smallest real next step together.

The shoreline is in sight.

Now move.